Publications

X. Sun, P.L. Rosin, R.R. Martin, F.C. Langbein, Fast and Effective Feature-Preserving Mesh Denoising, IEEE Trans. Visualization and Computer Graphics 13 (5), 925-938, 2007. [doi] [pdf preprint] [Source Code.zip] [Source Code.rar] [Windows exe.zip] [Windows exe.rar]

We present a simple and fast mesh denoising method, which can remove noise effectively while preserving mesh features such as sharp edges and corners. The method consists of two stages. First, noisy face normals are filtered iteratively by weighted averaging of neighboring face normals. Second, vertex positions are iteratively updated to agree with the denoised face normals. The weight function used during normal filtering is much simpler than that used in previous similar approaches, being simply a trimmed quadratic. This makes the algorithm both fast and simple to implement. Vertex position updating is based on the integration of surface normals using a least-squares error criterion. Like previous algorithms, we solve the least-squares problem by gradient descent; whereas previous methods needed user input to determine the iteration step size, we determine it automatically. In addition, we prove the convergence of the vertex position updating approach. Analysis and experiments show the advantages of our proposed method over various earlier surface denoising methods.
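The normal filtering stage described above can be illustrated with a minimal sketch. It assumes the trimmed quadratic weight takes the form (x - T)^2 when the dot product x of two unit normals exceeds a threshold T, and 0 otherwise; the function names, the default threshold, and the neighbor-list representation are hypothetical, and the vertex position updating stage is not shown.

```python
import numpy as np

def trimmed_quadratic(x, threshold):
    """Assumed weight form: (x - T)^2 above the threshold T, 0 otherwise."""
    return np.where(x > threshold, (x - threshold) ** 2, 0.0)

def filter_normals(normals, neighbors, threshold=0.5, iterations=1):
    """Iteratively replace each face normal by a weighted average of its neighbors.

    normals:   (F, 3) array of unit face normals.
    neighbors: list of index lists, one per face (hypothetical representation;
               whether a face is listed as its own neighbor is left to the caller).
    """
    normals = np.asarray(normals, dtype=float)
    for _ in range(iterations):
        updated = np.empty_like(normals)
        for i, nbrs in enumerate(neighbors):
            idx = np.asarray(nbrs, dtype=int)
            # Weight each neighbor by how closely its normal agrees with face i.
            weights = trimmed_quadratic(normals[idx] @ normals[i], threshold)
            total = (weights[:, None] * normals[idx]).sum(axis=0)
            length = np.linalg.norm(total)
            # Keep the old normal if every neighbor was trimmed away.
            updated[i] = total / length if length > 0 else normals[i]
        normals = updated
    return normals
```

Because neighbors whose normals differ by more than the threshold receive zero weight, a face on the far side of a sharp edge does not pull the average across that edge.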


X. Sun, P.L. Rosin, R.R. Martin, F.C. Langbein, Random Walks for Mesh Denoising, Proc. ACM Symp. Solid and Physical Modeling SPM 2007, 11-22, ACM Siggraph 2007. ISBN 1595936660. [PPT] (Extended version: X. Sun, P.L. Rosin, R.R. Martin and F.C. Langbein, Random walks for feature-preserving mesh denoising, Computer Aided Geometric Design, vol. 25, pp. 437-456, 2008. [doi] [pdf preprint])

This paper considers an approach to mesh denoising based on the concept of random walks. The proposed method consists of two stages: a face normal filtering procedure, followed by a vertex position updating procedure which integrates the denoised face normals in a least-squares sense. Face normal filtering is performed by weighted averaging of normals in a neighbourhood. The weights are based on the probability of arriving at a given neighbour after a random walk of a virtual particle starting at a given face of the mesh and moving a fixed number of steps. The probability of a particle stepping from its current face to a given neighboring face is determined by the angle between the two face normals, using a Gaussian distribution whose width is adaptively adjusted to enhance the feature-preserving property of the algorithm. The vertex position updating procedure uses the conjugate gradient algorithm for speed of convergence. Analysis and experiments show that random walks of different step lengths yield similar denoising results. In particular, iterative application of a one-step random walk in a progressive manner effectively preserves detailed features while denoising the mesh very well. We observe that this approach is faster than many other feature-preserving mesh denoising algorithms.
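The random-walk weighting described above can be sketched as follows. This is an illustration under stated assumptions, not the paper's implementation: the one-step probability is taken to be proportional to exp(-angle^2 / (2 sigma^2)), sigma is fixed rather than adaptively adjusted, and the function names and adjacency-list representation are hypothetical.

```python
import numpy as np

def transition_matrix(normals, adjacency, sigma=0.3):
    """One-step probabilities of a particle moving from face i to face j.

    adjacency: list of index lists, one per face (hypothetical representation).
    sigma:     fixed Gaussian width in radians (assumption; the paper adapts it).
    """
    normals = np.asarray(normals, dtype=float)
    count = len(normals)
    P = np.zeros((count, count))
    for i, nbrs in enumerate(adjacency):
        for j in nbrs:
            cos_angle = np.clip(normals[i] @ normals[j], -1.0, 1.0)
            angle = np.arccos(cos_angle)
            P[i, j] = np.exp(-angle ** 2 / (2.0 * sigma ** 2))
        row_sum = P[i].sum()
        if row_sum > 0:
            P[i] /= row_sum   # normalise so each row is a probability distribution
        else:
            P[i, i] = 1.0     # isolated face: the particle stays where it is
    return P

def random_walk_filter(normals, adjacency, steps=1, sigma=0.3):
    """Average normals with weights equal to the k-step arrival probabilities."""
    normals = np.asarray(normals, dtype=float)
    P = transition_matrix(normals, adjacency, sigma)
    W = np.linalg.matrix_power(P, steps)   # row i: arrival probabilities from face i
    filtered = W @ normals
    lengths = np.linalg.norm(filtered, axis=1, keepdims=True)
    safe = np.where(lengths > 0, lengths, 1.0)
    return np.where(lengths > 0, filtered / safe, normals)
```

Applying `random_walk_filter` repeatedly with `steps=1` corresponds to the iterated one-step walk mentioned in the abstract, with the transition matrix rebuilt from the updated normals at each pass.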


Copyright Information

Papers listed above are presented to ensure timely dissemination of scholarly and technical work. Copyright and all rights therein are retained by authors or by other copyright holders. All persons copying this information are expected to adhere to the terms and constraints invoked by each author's copyright. In most cases, these works may not be reposted without the explicit permission of the copyright holder.


X. Sun, P.L. Rosin, R.R. Martin, F.C. Langbein, Noise in 3D Laser Range Scanner Data, Proc. IEEE Int. Conf. Shape Modeling and Applications SMI 2008, 37-45, IEEE Computer Society, 2008. ISBN 9781424422609. [PPT] (Extended version: X. Sun, P.L. Rosin, R.R. Martin and F.C. Langbein, Noise analysis and synthesis for 3D laser depth scanners, Graphical Models, vol. 71, pp. 34-48, 2009. [doi] [pdf preprint])

This paper discusses noise in range data measured by a Konica Minolta Vivid 910 scanner. Previous papers considering denoising 3D mesh data have often used artificial data comprising Gaussian noise, which is independently distributed at each mesh point. Measurements of an accurately machined, almost planar test surface indicate that real scanner data does not have such properties. An initial characterisation of real scanner noise for this test surface shows that the errors are not quite Gaussian, and more importantly, exhibit significant short range correlation. This analysis yields a simple model for generating noise with similar characteristics. We also examine the effect of two typical mesh denoising algorithms on the real noise present in the test data. The results show that new denoising algorithms are required to effectively remove real scanner noise.
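The difference between independent noise and noise with short range correlation can be illustrated with a small sketch. It is not the model from the paper: it simply smooths white Gaussian noise with a box kernel (using periodic boundaries for brevity), so the marginals remain Gaussian, and every name and parameter here is hypothetical.

```python
import numpy as np

def correlated_noise(shape, sigma=1.0, radius=1, seed=0):
    """White Gaussian noise averaged over a (2*radius+1)^2 box, rescaled to std sigma.

    radius=0 returns independent noise; larger radii give longer correlation.
    """
    rng = np.random.default_rng(seed)
    white = rng.normal(size=shape)
    smooth = np.zeros(shape)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            # np.roll wraps around, i.e. periodic boundary conditions.
            smooth += np.roll(white, (dy, dx), axis=(0, 1))
    smooth -= smooth.mean()
    smooth *= sigma / smooth.std()
    return smooth

def lag1_correlation(field):
    """Correlation coefficient between horizontally adjacent samples."""
    left = field[:, :-1].ravel()
    right = field[:, 1:].ravel()
    return np.corrcoef(left, right)[0, 1]
```

Comparing `lag1_correlation` for `radius=0` and `radius=1` shows the property that independent synthetic noise lacks: neighbouring samples of the smoothed field are strongly correlated even though both fields have the same standard deviation.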


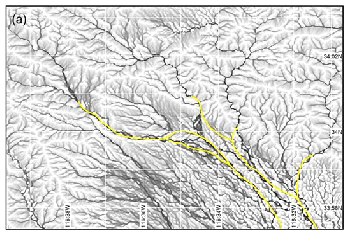

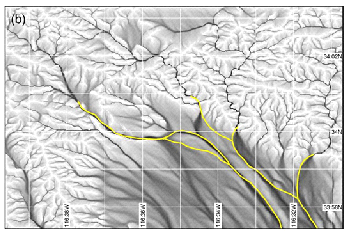

J.A. Stevenson, X. Sun and N.C. Mitchell, Despeckling SRTM and other topographic data with a denoising algorithm, Geomorphology, vol. 114, no. 3, pp. 238-252, 2010. [doi] [Datasets used in the article] [Source Code; for more, see the first paper above] [GRASS GIS addon script: r.denoise]

Noise in topographic data obscures features and increases error in geomorphic products calculated from DEMs. DEMs produced by radar remote sensing, such as SRTM, are frequently used for geomorphological studies. They often contain speckle noise which may significantly lower the quality of geomorphometric analyses. We introduce here an algorithm that denoises three-dimensional objects while preserving sharp features. In this study the algorithm is applied to topographic data (synthetic landscapes, SRTM, TOPSAR) and the results are compared against using a mean filter, using LiDAR data as ground truth for the natural datasets. The level of denoising is controlled by two parameters: the threshold (T) that controls the sharpness of the features to be preserved, and the number of iterations (n) that controls how much the data are changed. The optimum settings depend on the nature of the topography and of the noise to be removed. If the threshold is too high, noise is preserved. A lower threshold setting is used where noise is spatially uncorrelated (e.g. TOPSAR), whereas in some other datasets (e.g. SRTM), where filtering of the data during processing has introduced spatial correlation to the noise, higher thresholds can be used. Compared to those filtered to an equivalent level with a mean filter, data smoothed by this denoising algorithm are closer to the original data and to the ground truth. Changes to the data are smaller and less correlated to topographic features. Furthermore, the feature-preserving nature of the algorithm allows significant smoothing to be applied to flat areas of topography while limiting the alterations made in mountainous regions, with clear benefits for geomorphometric analysis in areas of mixed topography. 
The results of denoising on the derived flow accumulation and slope maps, particularly when compared to the results of mean filtering, demonstrate the usefulness of the algorithm in fields such as hydrological modelling and landslide prediction.


X. Sun, P.L. Rosin, R.R. Martin, F.C. Langbein, Bas-Relief Generation Using Adaptive Histogram Equalization, IEEE Trans. Visualization and Computer Graphics 15 (4), 642-653, 2009. [doi] [pdf preprint]

An algorithm is presented to automatically generate bas-reliefs based on adaptive histogram equalization (AHE), starting from an input height field. A mesh model may alternatively be provided, in which case a height field is first created via orthogonal or perspective projection. The height field is regularly gridded and treated as an image, enabling a modified AHE method to be used to generate a bas-relief with a user-chosen height range. We modify the original image-contrast-enhancement AHE method to use gradient weights also to enhance the shape features of the bas-relief. To effectively compress the height field, we limit the height-dependent scaling factors used to compute relative height variations in the output from height variations in the input; this prevents any height differences from having too great an effect. Results of AHE over different neighborhood sizes are averaged to preserve information at different scales in the resulting bas-relief. Compared to previous approaches, the proposed algorithm is simple and yet largely preserves original shape features. Experiments show that our results are, in general, comparable to and in some cases better than the best previously published methods.
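As a rough illustration of treating a height field as an image, the sketch below applies plain AHE: each output height is the local rank of the input height within a square window, scaled to a chosen range. It deliberately omits the paper's modifications (gradient weights, limited scaling factors, averaging over several neighborhood sizes), and the function name and parameters are hypothetical.

```python
import numpy as np

def ahe_relief(height, radius=2, out_range=1.0):
    """Plain adaptive histogram equalization of a gridded height field.

    Each output value is the fraction of heights in the local window that are
    less than or equal to the centre height (the local empirical CDF),
    multiplied by the desired output height range.
    """
    h = np.asarray(height, dtype=float)
    rows, cols = h.shape
    out = np.empty_like(h)
    for r in range(rows):
        for c in range(cols):
            # Window is clipped at the grid border.
            window = h[max(0, r - radius):r + radius + 1,
                       max(0, c - radius):c + radius + 1]
            out[r, c] = np.mean(window <= h[r, c]) * out_range
    return out
```

Since the output depends only on local ranks, a very large height step in the input is compressed into at most `out_range` in the output, which is the basic effect a bas-relief needs. The double loop is quadratic in the window size and is meant for small grids only.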

[Figure panels: four input/output pairs; scanner noise and synthetic noise]